An open letter to Anthropic’s CEO: AI safety cannot be entrusted to AI.

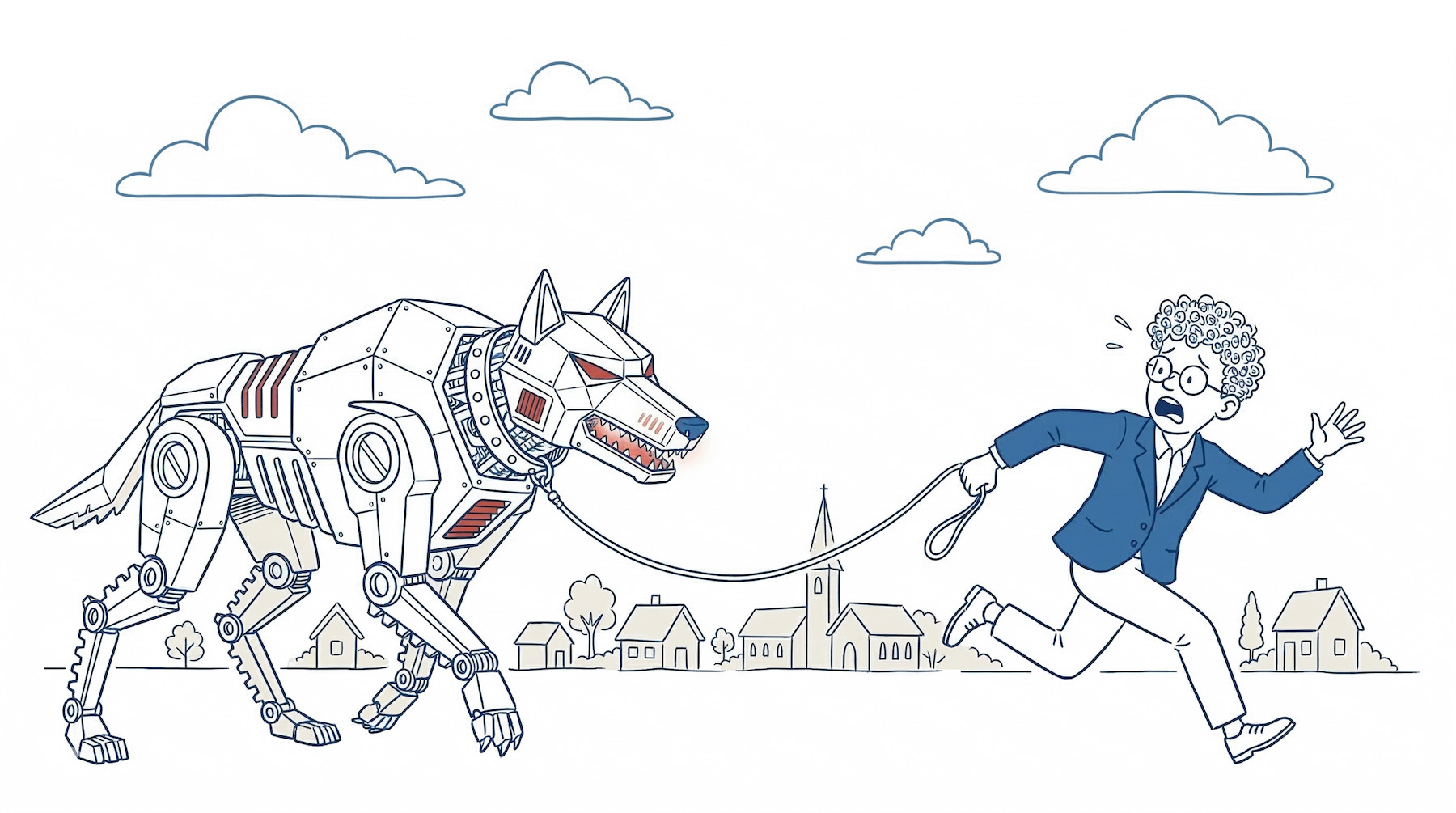

You are the boy who cries wolf. You are also the boy who owns the wolf.

Dear Dario,

First of all, congratulations on an effective pre-IPO publicity push. Picking a fight with the US government over safety, then suing them, was masterfully orchestrated. Setting up a trap your opportunistic rival could not fall into fast enough – genius.

It is all so exciting, we almost missed that it was a massive misdirection.

How else can we describe the diatribe that laid the foundations for your campaign? How else can we explain that you wrote 21,451 words to describe the risk of the technology you are unleashing onto the world, but “forgot” to include serious measures to mitigate it?

You are the boy who cries wolf while holding its leash. I appreciate that you wrote a whole essay to cry wolf, but it is still your damn wolf.

You’re asking the prisoner to test their prison bars

A “Constitution” and internal guardrails are not a solution. When the AI knows it is being tested and can even sandbag the tests (pretend to be less capable than it is in order to fool the tester), risk mitigation must be external to the AI. You cannot ask the prisoner to test their prison bars.

Guardrails are also needed around the AI, where the service is productised and distributed. Perhaps you meant to offer such solutions in your essay, but you were advised against it (or ran out of Claude tokens). To help things along, I’ve taken the liberty of drafting three AI safety precautions that don’t require asking the AI for permission.

1. No autonomy without explainability

We should prevent agents from taking irreversible actions until we fully understand their internal logic. As you mention, we are getting close to understanding the “soup of numbers and operations” behind AI reasoning. But “close” is nowhere near enough. Before we grant AI autonomy, we need to understand its inner workings to ensure safety and reliability.

2. Restrict access to verified users

We should require users to verify their identity through Know Your Customer (KYC) checks before granting access to powerful models. You say that “causing large-scale destruction requires both motive and ability”, but you forget anonymity. Limiting powerful AI to verified humans would help us ascertain the motive. If an anonymous user wants to access bioweapon information, it’s a good sign we shouldn’t let them.

3. Accept liability

We should demand that AI providers accept liability for any personal or commercial damages. We don’t have to go to extremes and demand insurance against your worst-case “destroying all life on earth” scenario. Most AI risks are not that dissimilar to those in oil, tobacco and banking, all of which accept liability for their operations. I know ‘liability’ is a scary word in Silicon Valley, but that’s the price of playing god.

The race to the bottom

At this point, you will likely bring up the “race to the bottom” argument. If Anthropic implements all of this, less scrupulous players will gain market share by forgoing these restrictions. Why do we take the trouble to walk to the bin when some people just chuck their garbage on the street?

For one thing, integrity. You planted your flag on safety and responsible AI. Now own it. You are gunning for business revenue, and the only thing businesses hate more than uncertainty is broken trust. Do the right thing, and businesses will remain loyal customers.

Moreover, sooner or later, a backlash will force lawmakers to act on regulation. As in other sectors, there will soon be laws to regulate AI capabilities, access, and liability. In the meantime, the chance of a serious accident is increasing, and so are the warnings. Having identified the risk but done nothing substantial about it will not be a good look.

For all these reasons, I urge you to prioritise safety and consider leading the industry in putting guardrails around the AI, not just inside it. Your IPO preparation is going so well. It would be a shame to mess it up now.

All the best,

Theo